Biometric data and the law — GDPR, BIPA, CCPA, and what 'on-device only' actually means

- FaceClock Team

- Privacy

- April 22, 2026

This article exists because I keep getting the same email.

It usually starts: “We were thinking of using face-based time tracking, but our HR person flagged that we’d have to comply with [law]. Is that going to be a nightmare?”

The short answer: it depends entirely on whether the biometric data leaves the device. The longer answer involves three laws, a couple of court cases, and a useful distinction that most vendors are vague about. So let’s walk through it carefully.

I’ll try to keep this to plain English. None of this is legal advice — if you have a real deployment with real risk, get a real lawyer. But I want to give you enough to know what questions to ask.

What counts as biometric data, in the laws that matter

Three regulatory regimes show up most often in this conversation: GDPR (EU), BIPA (Illinois, US), and CCPA/CPRA (California, US). They define biometric data in similar but not identical ways.

GDPR defines biometric data in Article 4(14) as “personal data resulting from specific technical processing relating to the physical, physiological or behavioural characteristics of a natural person, which allow or confirm the unique identification of that natural person”. Article 9 then treats it as a special category of personal data when it is processed for the purpose of uniquely identifying a person.

BIPA (the Illinois Biometric Information Privacy Act) defines a “biometric identifier” as a “retina or iris scan, fingerprint, voiceprint, or scan of hand or face geometry.” Importantly, BIPA also covers “biometric information” — anything derived from those identifiers, like a face embedding vector.

CCPA/CPRA (California) treats biometric information broadly: “physiological, biological, or behavioral characteristics… [used] to establish individual identity.” This includes faceprints, voiceprints, and other measurements derived from a face.

For our purposes, three things follow from these definitions:

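- A photograph of a face becomes biometric data once it is run through face-recognition processing to identify someone. A photo that is merely stored generally is not: GDPR’s Recital 51 says as much, and BIPA excludes photographs from its definition of “biometric identifier,” although courts have allowed claims over face geometry extracted from photos.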

- A face embedding (the vector that face-recognition software computes from an image and uses for matching) is biometric data under all three.

- Even if you delete the original photos, retaining the embedding still counts as retaining biometric information.

Once you accept that you’re handling biometric data, the question becomes: what do these laws actually require?

What the laws require, briefly

I’ll skip the long form and give you the core obligations. Note: there are exceptions, edge cases, and jurisdictional carve-outs. The point here is to give you the shape of what’s expected.

Under GDPR (for biometric data used to identify someone — Article 9):

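- You need a lawful basis under Article 6 and, because this is special-category data, a separate Article 9(2) condition. For employment, explicit consent is rarely a safe choice (the imbalance between employer and employee makes consent shaky). The more usual route is Article 9(2)(b), which covers obligations and rights under employment law where Union or Member State law or a collective agreement authorizes the processing; in some Member States, national legislation explicitly authorizes biometric processing for time-and-attendance. Substantial public interest and vital interests exist as conditions but rarely fit a time clock.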

- You must conduct a Data Protection Impact Assessment (DPIA).

- You must apply data minimization (only collect what’s necessary).

- You must implement appropriate technical and organizational security measures.

- You must inform the data subject in clear language.

- You must respect data-subject rights (access, deletion, portability where applicable).

Under BIPA (Illinois):

- Written informed consent before collection.

- A publicly available, written retention and destruction policy.

- A reasonable retention schedule: biometric data must be destroyed when the initial purpose for collecting it has been satisfied or within 3 years of the individual’s last interaction with the organization, whichever comes first.

- No selling, leasing, or trading biometric data.

- Reasonable care in storing, transmitting, and protecting biometric data.

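The penalty structure under BIPA is what gets attention: statutory damages of $1,000 per negligent violation and $5,000 per intentional or reckless violation, enforceable through a private right of action. Illinois courts have read the statute liberally. Rosenbach v. Six Flags (2019) held that a plaintiff need not show actual harm, and Cothron v. White Castle (2023) held that a claim accrues with each scan. Several large class-action settlements followed. A 2024 amendment narrowed the per-scan exposure by treating repeated collection of the same identifier from the same person, by the same method, as a single violation.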
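To see why the way violations are counted matters so much, here is a rough arithmetic sketch. The headcount and scan numbers are invented for illustration, damages under the statute are discretionary rather than automatic, and nothing here predicts an actual award. The function name and parameters are my own.

```python
NEGLIGENT = 1_000  # statutory damages per negligent violation (USD)
RECKLESS = 5_000   # statutory damages per intentional or reckless violation (USD)


def exposure(people, scans_per_person, per_violation, count_each_scan):
    """Theoretical maximum exposure under two ways of counting violations.

    count_each_scan=True  : every scan is its own violation (per-scan accrual).
    count_each_scan=False : one violation per person, however many scans.
    """
    violations = people * scans_per_person if count_each_scan else people
    return violations * per_violation


# Illustrative only: 50 employees, two scans a day over roughly 250 working days.
per_scan = exposure(50, 500, NEGLIGENT, count_each_scan=True)
per_person = exposure(50, 500, NEGLIGENT, count_each_scan=False)
print(f"per-scan counting:   ${per_scan:,}")
print(f"per-person counting: ${per_person:,}")
```

The gap between the two figures is the reason per-scan accrual alarmed small employers, and the reason the counting rule is worth asking your lawyer about.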

Under CCPA/CPRA (California):

- Notice at collection (what you’re collecting and why).

- A right to know what’s been collected.

- A right to deletion.

- A right to opt out of sale or sharing.

- For “sensitive personal information” (which includes biometric data used for identification), a right to limit use.

There are more obligations across all three, but those are the operational core.

How “on-device only” changes the calculation

Here’s the distinction that matters most, and that vendors often gloss over.

Cloud-based biometric processing means the device takes a photo, sends it to a server, the server computes the embedding and does the match, and sends the result back. This is how most “easy to deploy” face-recognition products work. The biometric data is in transit and at rest in the vendor’s cloud. The vendor is a data processor at minimum, often a controller.

On-device biometric processing means everything happens locally. The phone or tablet captures the image, computes the embedding using a model bundled with the app, compares it to embeddings already stored on that device, and produces a match or non-match. No biometric data ever leaves the device. There is no server. There is no transmission.
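As a rough illustration of that local matching step, here is a minimal Python sketch. It assumes embeddings are plain lists of floats compared by cosine similarity; the store, the threshold, and every function name are hypothetical, and a real app would compute the probe embedding with its bundled model rather than receive it as an argument.

```python
import math

# Hypothetical stand-in for the app's local embedding store.
# Real embeddings usually have hundreds of dimensions; these are toy values.
LOCAL_EMBEDDINGS = {
    "alice": [0.9, 0.1, 0.4],
    "bob": [0.1, 0.8, 0.6],
}

MATCH_THRESHOLD = 0.95  # assumed value; real systems tune this empirically


def cosine_similarity(a, b):
    """Cosine of the angle between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


def match_on_device(probe, store=LOCAL_EMBEDDINGS, threshold=MATCH_THRESHOLD):
    """Return the best-matching enrolled ID, or None if nothing clears the threshold.

    Everything here is local computation: no network call, no server round trip.
    """
    best_id, best_score = None, -1.0
    for person_id, enrolled in store.items():
        score = cosine_similarity(probe, enrolled)
        if score > best_score:
            best_id, best_score = person_id, score
    return best_id if best_score >= threshold else None
```

The point of the sketch is what is absent: there is no HTTP client, no upload, and no vendor endpoint anywhere in the matching path.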

These two architectures have very different legal profiles, and the laws — to varying extents — recognize this.

Under GDPR, on-device-only processing significantly reduces the “controller” role for the software vendor (because they have no access to the data) and tightens the focus on the deploying organization. The DPIA still applies; the lawful basis still applies. But the risk surface is dramatically smaller because there’s no cross-border transfer, no third-party processor relationship, no cloud breach risk.

Under BIPA, disclosure is a separate concern. Section 15(d) requires consent before biometric data is disclosed or otherwise disseminated to a third party, and with on-device-only processing nothing is disclosed to anyone: the data never leaves the device. Several BIPA cases have hinged on whether data was transmitted off-device; on-device-only architectures are markedly less exposed.

Under CCPA/CPRA, the “sale” and “sharing” risks largely vanish in an on-device architecture (because there’s nothing to sell or share). The “right to know” and “right to deletion” become operational concerns for the device administrator, not the software vendor.

This isn’t a magic-bullet legal story. It just means the regulatory weight gets distributed differently, and the parts that scared people most about biometric processing — third-party access, cross-border transfers, breach-at-vendor risk — mostly disappear.

What “on-device only” needs to actually mean to count

A few things I see vendors mislabel:

“Edge processing” is not always on-device. Some products say “edge” but actually mean “the embedding is computed in your office, then transmitted to our cloud for matching.” That is still a flow of biometric data to a third party under GDPR, and potentially a cross-border transfer.

“We delete photos immediately” doesn’t help if you keep the embedding in the cloud. The embedding is biometric information under all three regimes.

“No biometric data is stored on our servers” doesn’t help if the data is transmitted in real time. Transmission is its own regulated event, especially under BIPA Section 15(d).

For a vendor to legitimately claim on-device-only processing, all of these have to be true:

- The face matching model runs on the device (typically as a quantized neural net using the device’s NPU).

- Embeddings are stored only in the app’s local storage.

- No network connection is required for matching to function.

- There’s no telemetry that includes biometric data — even hashed.

- The architecture works with airplane mode on, indefinitely.

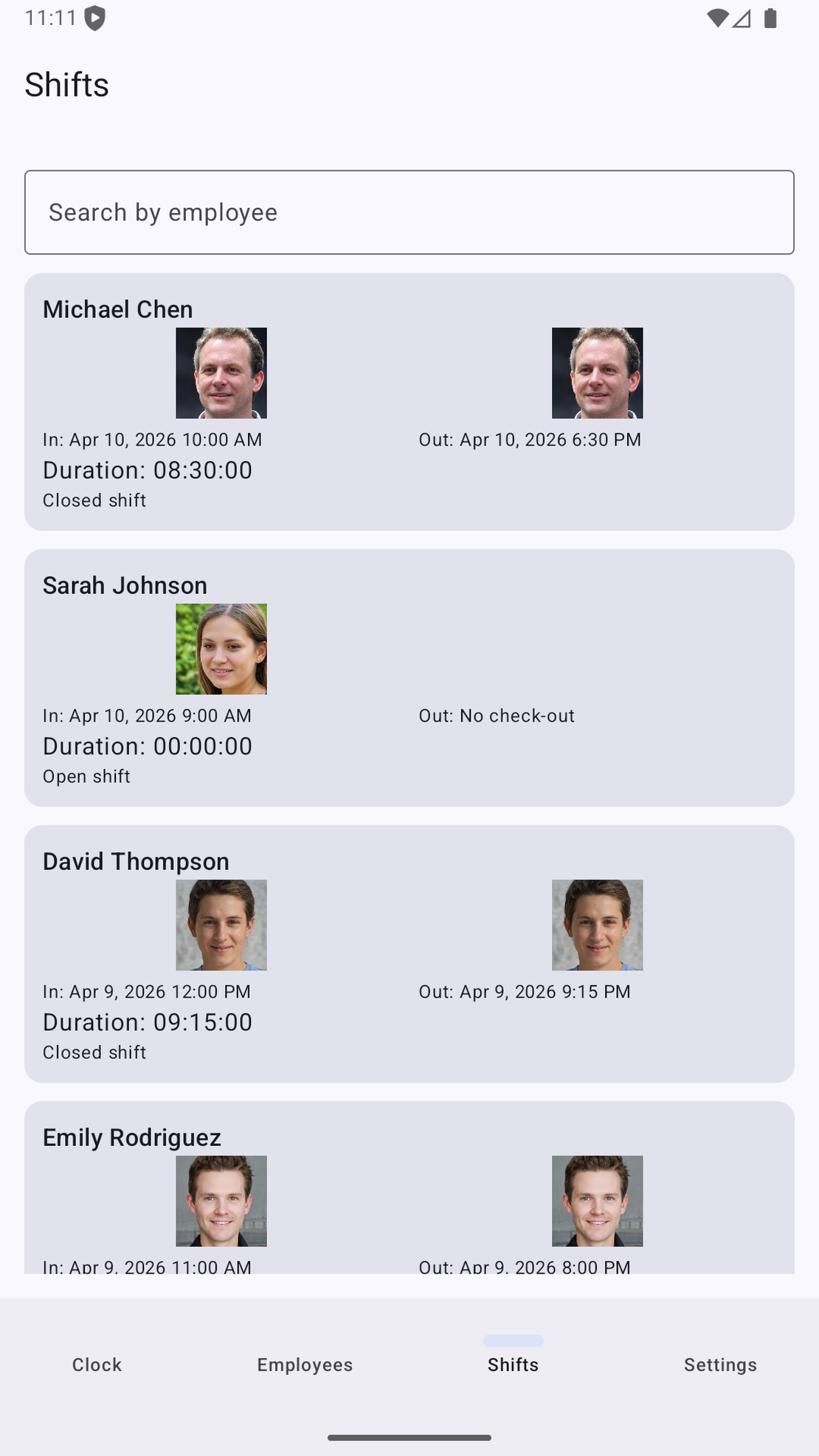

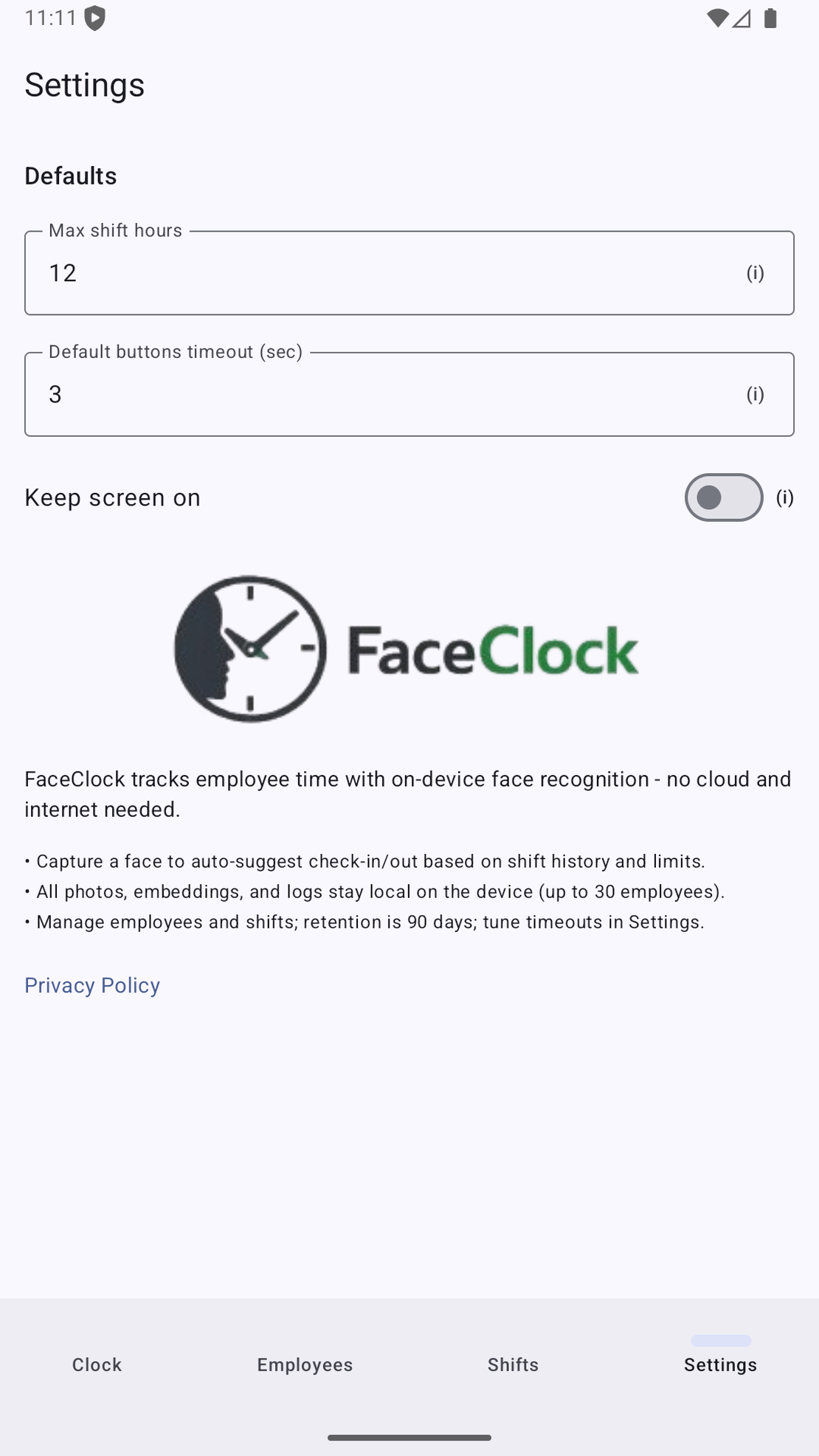

You can verify this for an open-source app like FaceClock by reading the code or running it in a network-isolated test. For a closed-source app, you’re trusting the vendor’s documentation.
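One way to picture a network-isolated test, sketched in Python: block socket creation for the duration of a test and confirm the matching code still runs. This is only an in-process illustration with made-up function names. It catches code that creates sockets through Python’s `socket` module, not native code or anything that grabbed its own reference earlier. For a real mobile app the equivalent check is airplane mode plus a packet capture on the network.

```python
import socket
from contextlib import contextmanager


class NetworkAccessAttempted(RuntimeError):
    """Raised when code under test tries to open a socket."""


@contextmanager
def network_blocked():
    """Temporarily make every new socket creation in this process fail."""
    original_socket = socket.socket

    def guarded(*args, **kwargs):
        raise NetworkAccessAttempted("code under test tried to open a socket")

    socket.socket = guarded
    try:
        yield
    finally:
        # Always restore the real class, even if the test body raised.
        socket.socket = original_socket


def local_only_match(probe, enrolled):
    """Hypothetical matcher that does pure local computation."""
    return probe == enrolled


def leaky_match(probe, enrolled):
    """Hypothetical matcher that opens a network socket along the way."""
    socket.socket(socket.AF_INET, socket.SOCK_STREAM)
    return probe == enrolled
```

Running `local_only_match` inside `with network_blocked():` succeeds, while `leaky_match` raises `NetworkAccessAttempted`. That difference is what you want a vendor’s “works offline” claim to survive.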

What you, as a deployer, still have to do

Even with the cleanest on-device-only architecture, the organization deploying the app remains a data controller. That means you still need to:

Decide your lawful basis (or equivalent). Under GDPR, this is usually the most carefully argued bit. In some EU countries, time-and-attendance with biometrics has been explicitly authorized by national law; in others, it’s contested. Get local advice.

Write a clear notice. What are you collecting, why, where does it live, how long do you keep it, who has access?

Get consent appropriately. Under BIPA, this is written and informed. Under GDPR, consent is a weak basis in employment relationships, so deployers usually rest the processing on another basis and treat consent as secondary at most. Under CCPA, notice-and-opt-out is the structural pattern.

Set retention rules. FaceClock’s default of 90 days for shift records, with employee profiles retained until manually removed, is a reasonable starting point — but check it against your local rules. (Germany, for instance, has specific timekeeping retention requirements that can override shorter periods.)

Document what you’ve done. Especially for GDPR, the ability to show you’ve done a DPIA matters as much as the DPIA itself.

Have a deletion process. When an employee leaves, can the device administrator clearly remove that person’s biometric data? FaceClock supports this with one button. Many cloud tools require an emailed support request.
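To make “one button” concrete, here is a minimal sketch of what a local deletion routine might look like, using SQLite as an assumed on-device store. The table layout and function names are hypothetical and not taken from FaceClock’s code. The deletion log keeps only an ID and a timestamp, so you can later show that the deletion happened without retaining anything biometric.

```python
import sqlite3


def open_store(path=":memory:"):
    """Create a hypothetical local store with one embedding row per employee."""
    conn = sqlite3.connect(path)
    conn.execute(
        "CREATE TABLE IF NOT EXISTS embeddings ("
        "employee_id TEXT PRIMARY KEY, vector BLOB NOT NULL)"
    )
    conn.execute(
        "CREATE TABLE IF NOT EXISTS deletion_log ("
        "employee_id TEXT NOT NULL, deleted_at TEXT NOT NULL)"
    )
    return conn


def enroll(conn, employee_id, vector_bytes):
    """Store (or overwrite) the embedding for one employee."""
    conn.execute(
        "INSERT OR REPLACE INTO embeddings (employee_id, vector) VALUES (?, ?)",
        (employee_id, vector_bytes),
    )
    conn.commit()


def delete_biometric_data(conn, employee_id, deleted_at):
    """Remove the embedding and record that the deletion happened.

    Returns True if a row was actually removed, False if none existed.
    """
    cursor = conn.execute(
        "DELETE FROM embeddings WHERE employee_id = ?", (employee_id,)
    )
    removed = cursor.rowcount > 0
    if removed:
        conn.execute(
            "INSERT INTO deletion_log (employee_id, deleted_at) VALUES (?, ?)",
            (employee_id, deleted_at),
        )
    conn.commit()
    return removed
```

Whatever the real storage looks like, the property to check is the same: after the administrator presses the button, no copy of the embedding remains on the device or in its backups.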

Three things that often surprise people

1. “Consent” is not the silver bullet it looks like. Especially in the EU. Power-imbalanced relationships (employer/employee) make consent intrinsically suspect, and a regulator or court reviewing whether your consent was freely given may be skeptical. Other Article 6 bases (legitimate interests, contractual necessity, legal obligation) often hold up better, but for biometric data they still have to be paired with an Article 9(2) condition, such as statutory authorization under employment law.

2. Device backups can re-introduce the cloud problem. If the device your employees clock in on backs up automatically to iCloud or to a Google account, the biometric data may be sitting in someone’s cloud whether you wanted it to or not. Disable app-level backup or check with your administrator.

3. The retention clock is tied to the purpose, not to when you get around to cleanup. BIPA requires destruction when the initial purpose has been satisfied (for a time-clock app, usually the end of the employment relationship) or within 3 years of the person’s last interaction with you, whichever comes first. Employees who left two years ago may still have biometric data on the device, even though you stopped using it the day they left. Periodic cleanup matters.
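A periodic cleanup job needs a rule for when each person’s data is due. The sketch below encodes BIPA’s statutory wording as I read it: the earlier of the date the purpose was satisfied and three years after the person’s last interaction. The record fields and function names are hypothetical, and other jurisdictions will need different rules.

```python
from datetime import date


def add_years(d, years):
    """Shift a date by whole years, mapping Feb 29 to Feb 28 when needed."""
    try:
        return d.replace(year=d.year + years)
    except ValueError:
        return d.replace(year=d.year + years, day=28)


def destruction_deadline(purpose_ended, last_interaction, max_years=3):
    """Earlier of: the day the purpose ended, or max_years after last interaction.

    purpose_ended may be None while the person is still employed.
    """
    outer_limit = add_years(last_interaction, max_years)
    if purpose_ended is None:
        return outer_limit
    return min(purpose_ended, outer_limit)


def due_for_destruction(records, today):
    """Return IDs whose biometric data should be destroyed on or before today."""
    return [
        r["employee_id"]
        for r in records
        if destruction_deadline(r["purpose_ended"], r["last_interaction"]) <= today
    ]


records = [
    # Left the company two years ago; data is still on the device.
    {"employee_id": "e1", "purpose_ended": date(2024, 3, 1),
     "last_interaction": date(2024, 3, 1)},
    # Current employee who clocked in this week.
    {"employee_id": "e2", "purpose_ended": None,
     "last_interaction": date(2026, 4, 20)},
]
print(due_for_destruction(records, today=date(2026, 4, 22)))
```

Run on a schedule, a check like this turns “periodic cleanup” from a good intention into a list of names the administrator can act on.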

The reality, as I’ve seen it

I’ve helped a handful of organizations deploy face-based time tracking. None of them had a difficult time with the law, once they got past the initial alarm. The combination of (a) on-device-only architecture and (b) a clear written notice and consent flow was enough for every one of them.

The hard cases tend to involve:

- Multi-jurisdictional deployments where the rules vary across sites.

- Industries with sector-specific rules layered on top (healthcare, financial services).

- Unionized workplaces where collective agreements impose additional process.

The easy cases — a single cafe, a single dental practice, a single small workshop — are usually a one-page consent form and a paragraph in the employee handbook.

A modest recommendation

If you’re considering biometric time tracking, do two things in this order:

First, make sure the architecture is genuinely on-device-only. In my experience this removes most of the legal complexity. Verify, don’t trust the marketing copy.

Second, get fifteen minutes with a local employment lawyer to confirm your specific deployment is fine in your jurisdiction. The cost is small. The peace of mind is large.

If the architecture is right and the local advice checks out, you’re done. The “biometric privacy nightmare” most people are afraid of is a nightmare that’s specific to cloud-based processing — not to face recognition itself.